Most token spending comes from unnecessary context loading, bloated memory, and inefficient tool usage. Simple techniques like memory distillation and model mixing can dramatically reduce costs without sacrificing performance. Combining multiple optimizations can cut monthly expenses from $300 down to around $80 or less. Start with memory management for the biggest immediate savings, then layer on the rest for maximum impact.

Where Your Tokens Are Actually Going

Before cutting costs, it's important to understand the main sources of token consumption in OpenClaw

Every conversation starts by loading the system prompt, memory files, skill instructions, and chat history. This initial step alone can easily consume 50,000 to 100,000 tokens before the agent even reads your message

Tool calls add significant overhead too. When many tools are available, their full descriptions can add thousands of tokens to every prompt

Unmanaged memory files grow endlessly. A single 10KB memory file loaded with every message quickly becomes expensive

Long conversations also pile up fast. A thread with 50 messages can reach 200,000 tokens of context, driving costs higher with each exchange

Technique 1: Memory Distillation (Save 30–40%)

Memory distillation is one of the most effective ways to reduce token usage. The idea is to regularly summarize and compress your memory files.

Write daily logs into separate dated files (e.g., memory/YYYY-MM-DD.md)

Every few days, review these logs and extract only the important details into a concise main MEMORY.md file

Archive older logs (anything over two weeks old) so the agent no longer loads them automatically

Results: Memory size often drops from 10–20KB down to just 2–3KB. This can save 5,000–10,000 tokens per message (based on roughly 4 tokens per word)

Advanced tip: Split MEMORY.md into topic-based sections (such as contacts, projects, or preferences) and load only the relevant parts for the current task

Technique 2: Stateful Local Memory (Save 15–20%)

Instead of reloading the same information repeatedly, use local caching and smart retrieval to keep only what's needed.

Practical approaches:

Store frequently used details like project information, API keys, or workflow status in structured local files that load on demand

Apply semantic search with embedding models to pull only the most relevant memory snippets rather than the entire history

Keep essential context in small, always-loaded files while making everything else load dynamically

The key insight: Your agent doesn't need to know every detail of your life in every message — it only needs the information relevant to the current task

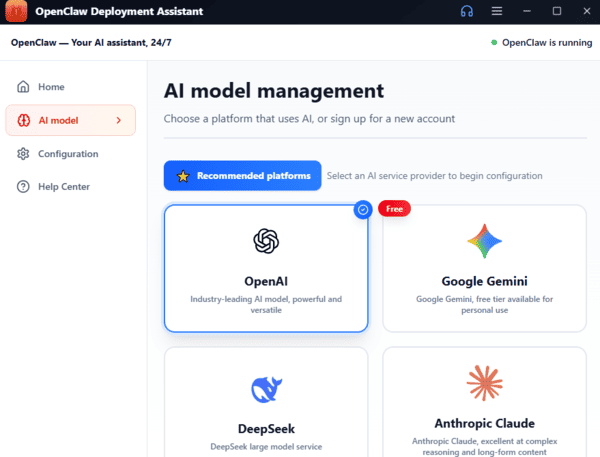

Technique 3: Model Mixing (Save 20–40%)

Not every task requires the most expensive model. Smart model selection can deliver major savings

For planning and complex reasoning, use powerful models like Claude Opus 4 or GPT-5. For routine execution and simpler tasks, switch to more affordable options such as Claude Sonnet 4.5 or GPT-5 Mini

For batch processing, consider cost-effective choices like DeepSeek V3 or even local models. Many users report 30–40% overall savings just by routing different tasks to the right model

Technique 4: Prompt Caching Optimization (Save 10–25%)

Take advantage of your AI provider's prompt caching features. Cached tokens are often 75–90% cheaper than new ones.

Best practices:

Keep your system prompt stable — changing it frequently invalidates the cache

Maintain consistent tool ordering so the prompt structure stays predictable

Place static content at the beginning of the prompt, where caching works most effectively

Expected impact: With good habits, you can achieve 50–70% cache hit rates, effectively cutting context loading costs in half

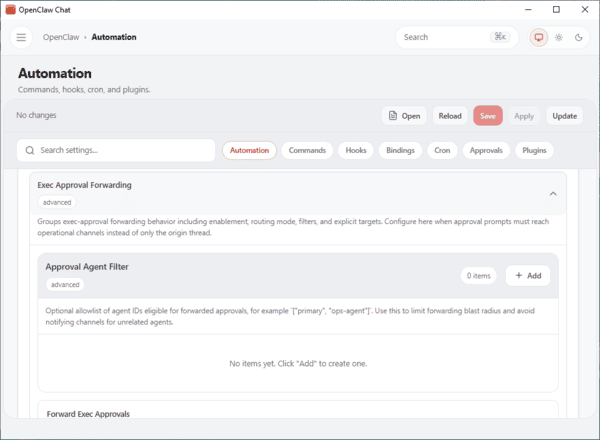

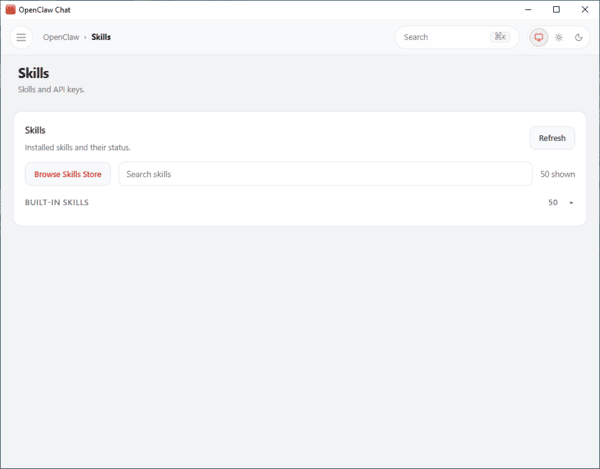

Technique 5: Skill Consolidation (Save 5–15%)

Every active skill increases prompt size. Regular cleanup keeps things lean.

Actionable steps:

Remove any skills you haven't used in the past two weeks

Merge similar skills (for example, combine separate search tools for different platforms into one unified research skill)

Configure skills to load only when triggered, rather than including them in every prompt

Real-World Savings Breakdown

Memory distillation alone can reduce costs by about 35%, bringing the monthly bill down to roughly $195. Adding stateful local memory saves another 17%, lowering it further to about $162

Model mixing then contributes around 30% in savings, bringing the cost to $113. Prompt caching optimization adds another 20% reduction, resulting in roughly $90. Finally, skill consolidation trims an extra 10%, landing at approximately $81 per month

Final Thoughts and Next Steps

Token optimization isn't about making your agent less capable — it's about making it smarter with resources

Start with memory distillation: it takes just 30 minutes and delivers the largest gains (30–40%). Then implement the other techniques one by one, prioritizing by impact

Core principle: Tokens should be spent on delivering valuable work, not on repeatedly loading irrelevant context

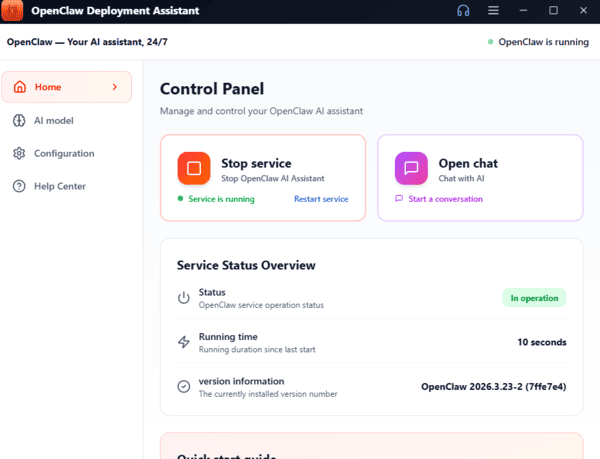

For users who want easy setup OpenClaw, OpenClawTool is a perfect choice. With one click, you can setup your OpenClaw easily

By applying memory distillation, stateful memory, model mixing, prompt caching, and skill consolidation, most users can reduce OpenClaw token costs by 60–80%. Combined with smart platform choices, what once cost hundreds per month can drop to a fraction of that — making powerful local AI both affordable and sustainable.