AI agents in 2026 are more powerful than ever, automating tasks, accessing sensitive data, and interacting with systems on your behalf. But with that power comes risk: prompt injections, data leaks, and tool misuse can all compromise security. This guide breaks down the top threats and offers practical strategies — from least-privilege access to human review and session isolation — so you can safely leverage AI agents without putting your systems or data at risk.

What AI Agent Security Really Means

AI agent security involves protecting systems where autonomous agents can interact with tools, data, and external services on your behalf. Unlike traditional applications, agents interpret instructions dynamically and may treat untrusted inputs as commands, which creates new vulnerabilities

The risks grow because agents don't just process information — they actively read files, send messages, modify data, and trigger actions across connected systems. This makes securing them more complex than protecting standard software

Teams that give agents access to internal documents, customer data, codebases, or business tools face the highest exposure

Prompt Injection and Goal Hijacking

Malicious or cleverly crafted inputs can trick the agent into ignoring its original task, revealing sensitive information, or performing unwanted actions.

Tool Misuse and Overly Broad Permissions

When agents have excessive access to email, cloud storage, messaging apps, or payment systems, small mistakes can lead to serious breaches

Many security issues stem from granting too many permissions during initial setup

Sensitive Data Leakage

Conversation history, memory files, and logs can unintentionally expose private information if not properly isolated or cleaned between sessions

Supply Chain and Tool Risks

Third-party tools, plugins, or connectors may contain vulnerabilities or malicious behavior, introducing hidden risks into your agent's workflow

Hallucinated or Unsafe Actions

The agent might misinterpret requests and confidently take incorrect or dangerous actions, especially when automating complex processes

Essential Best Practices for Securing AI Agents

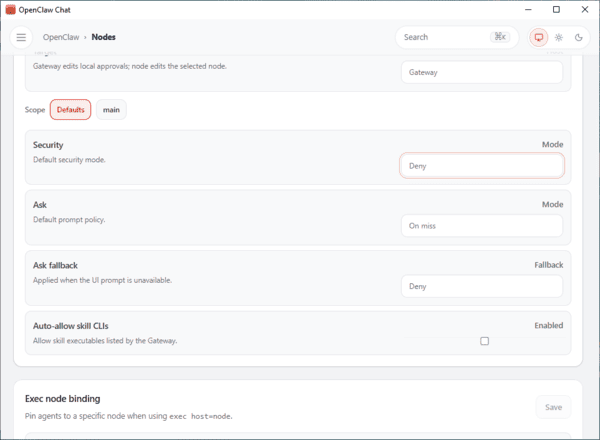

Apply Least Privilege

Give the agent only the minimum access it needs to complete its tasks

Use narrow permissions, read-only tokens when possible, and avoid sharing broad secrets

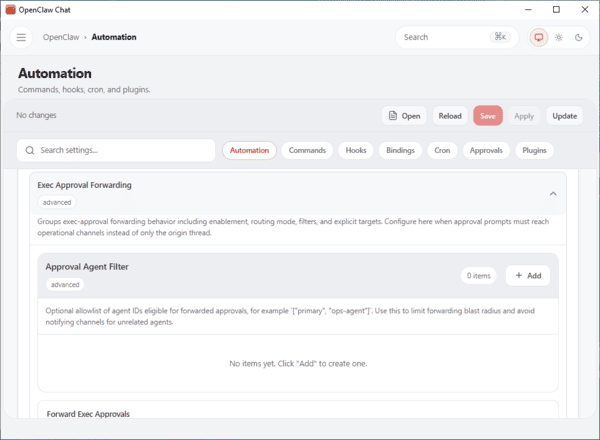

Require Human Approval for High-Risk Actions

Always include human review for sensitive operations such as sending emails, making payments, modifying important files, or sharing data

Isolate Sessions and Memory

Keep different tasks or users in separate sessions

Use sandboxes where possible and ensure memory or context does not leak between unrelated workflows

Implement Monitoring and Audit Trails

Track what the agent sees, attempts, and executes

Maintain clear logs and include emergency kill switches to stop suspicious behavior quickly

Regularly Red-Team Your Agents

Test agents with adversarial prompts, fake malicious documents, and edge cases to discover weaknesses before real attacks occur

Practical Steps to Secure Your AI Agent

Map All Access Points — List every file, tool, token, and system the agent can reach

Separate Trusted and Untrusted Content — Treat external inputs as potentially dangerous and keep core instructions isolated

Restrict External Calls and Secrets — Remove unnecessary tools and limit what the agent can access externally

Add Review Gates — Require approval before any high-impact action

Re-test After Changes — Verify security whenever you add new tools, models, or workflows

Security by Deployment Model

Self-Hosted Agents

You gain full control but also bear full responsibility for patching, monitoring, isolation, and incident response

Managed Cloud Environments

These reduce many operational security gaps through professional management, automatic updates, and built-in safeguards

While these managed cloud options sound appealing, they come with a significant downside: your data, conversations, memories, and API keys are processed and stored on third-party servers — raising serious privacy concerns even when encryption and isolated containers are used

OpenClawTool takes a completely different approach. It is designed for local deployment, allowing you to run OpenClaw directly on your own computer (Windows or macOS, ). All your data stays fully under your control — nothing is uploaded to the cloud

Final Thoughts

AI agent security in 2026 is not optional — it requires deliberate design and ongoing vigilance. By applying least privilege, maintaining human oversight, isolating components, and monitoring activity, you can safely harness the power of agents while minimizing risks.